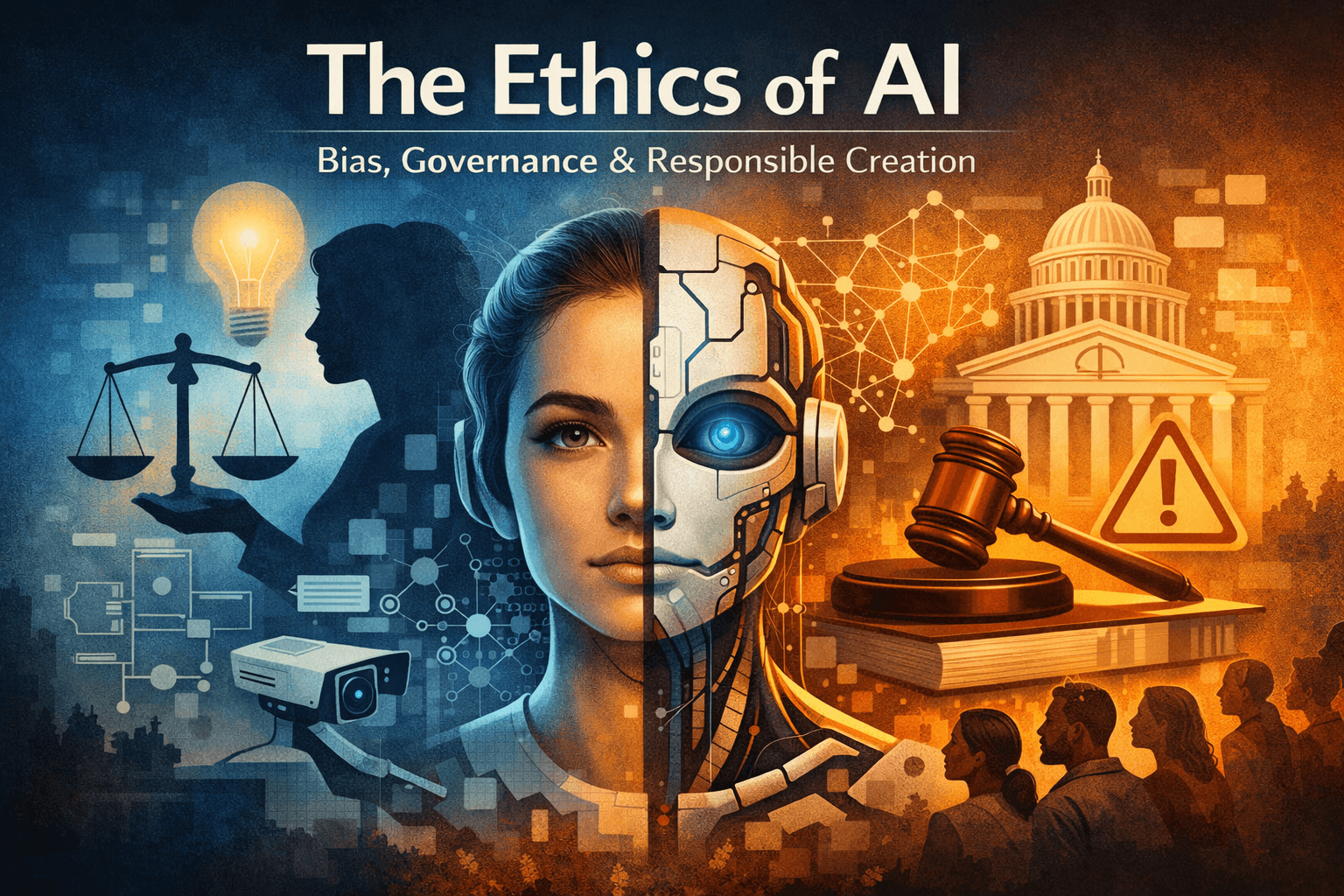

The Ethics of AI: Bias, Governance, and Responsible Creation

Understanding how bias, accountability, and design choices shape the future of artificial intelligence

AI is not neutral — and pretending it is might be our biggest mistake.

AI systems already help decide:

Who gets a loan

Who gets shortlisted for a job

What news you see

How police patrol neighborhoods

Which voices are amplified — and which are silenced

Yet many people still believe AI is objective, logical, and fair.

That belief is dangerously wrong.

AI doesn’t think.

AI doesn’t judge.

AI reflects us — our data, our values, and our blind spots.

And that’s where ethics begins.

What Do We Mean by “AI Ethics”?

AI ethics is not about slowing innovation.

It’s about directing power responsibly.

At its core, AI ethics asks three fundamental questions:

Is the system fair?

Who is accountable when it fails?

Should this system exist at all?

These questions become urgent when AI systems scale to millions — or billions — of people.

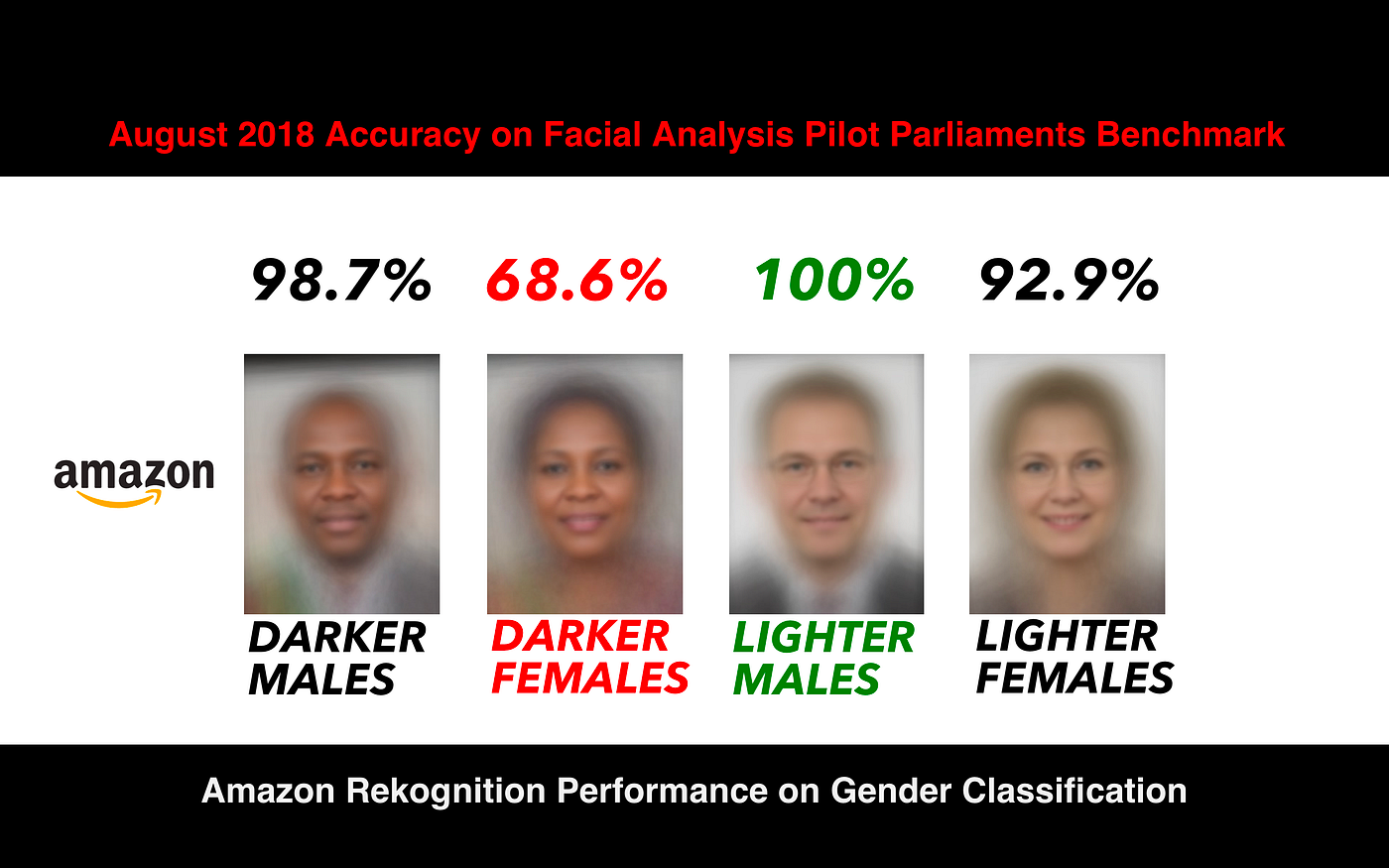

1️⃣ Bias in AI: The Problem We Keep Underestimating

AI learns from data.

Data comes from humans.

Humans are biased.

That simple chain explains most ethical failures in AI.

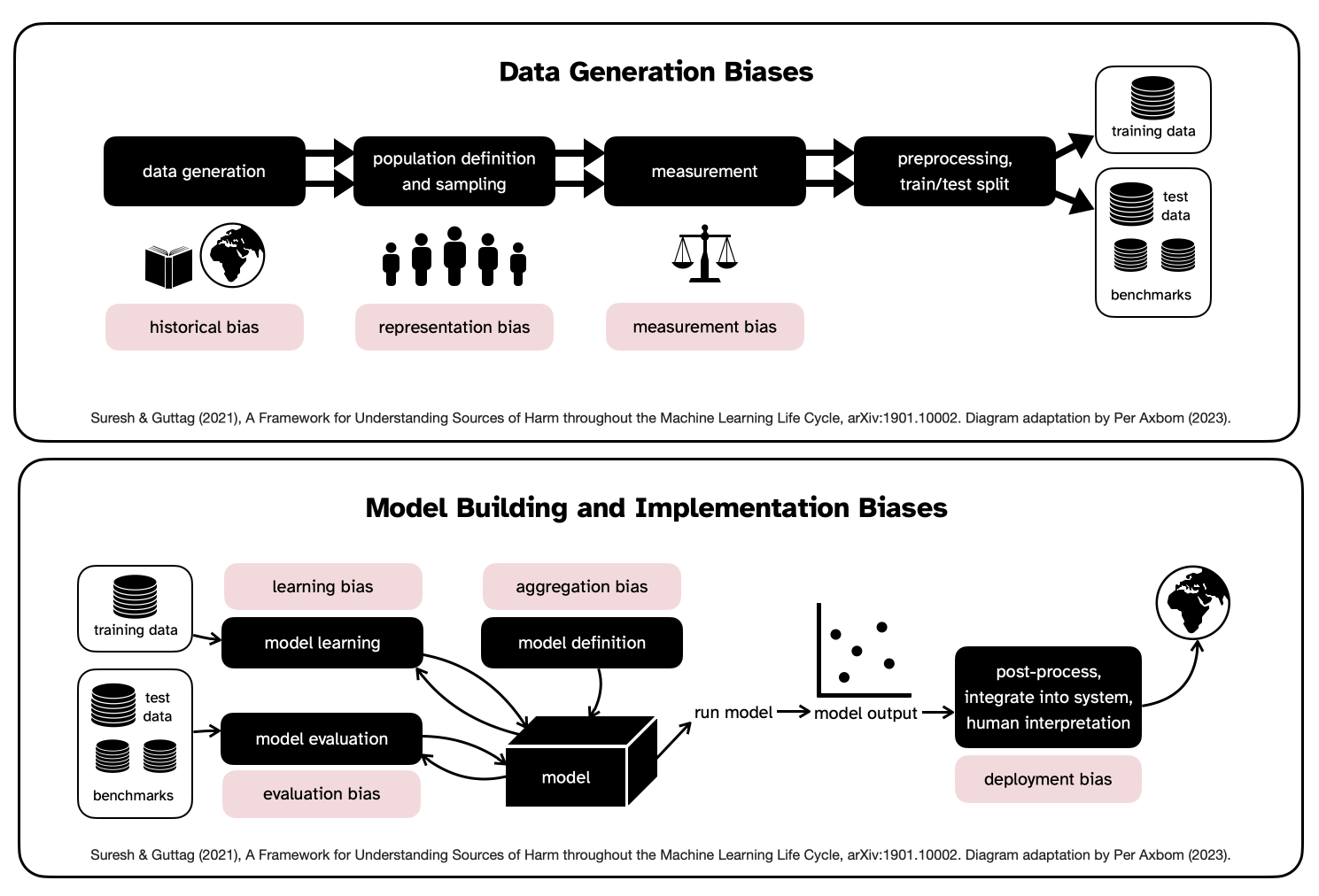

How Bias Enters AI Systems

Bias can appear at every stage:

Data collection → underrepresentation of certain groups

Data labeling → human prejudice encoded as “ground truth”

Model design → assumptions built into algorithms

Deployment → systems used outside their original context

Example:

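If a hiring model is trained on historical data from a male-dominated industry, it may learn that being male correlates with success, even if gender is never an explicit input. Other features, such as the wording of a résumé or gaps in employment history, can act as proxies for gender.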

The result?

Qualified candidates are filtered out

Discrimination scales automatically

No single human feels responsible
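The proxy effect in the example above can be sketched with a toy dataset. Everything here is made up for illustration: the records, the `played_rugby` feature, and the naive "model" that scores candidates by the historical hire rate for their feature value. It is not a real hiring system, only a small demonstration that a model which never sees gender can still score the genders differently.

```python
from collections import defaultdict

# Hypothetical historical hiring records: (gender, played_rugby, hired).
# Gender is NOT given to the "model" below; played_rugby is a made-up proxy.
history = [
    ("M", True, True), ("M", True, True), ("M", True, False),
    ("M", False, True), ("F", False, False), ("F", False, False),
    ("F", False, True), ("F", True, True),
]

def hire_rate_by(records, key_index):
    """Return the historical hire rate for each value of one column."""
    counts = defaultdict(lambda: [0, 0])  # value -> [hired, total]
    for row in records:
        counts[row[key_index]][0] += int(row[2])
        counts[row[key_index]][1] += 1
    return {value: hired / total for value, (hired, total) in counts.items()}

# A naive "model" that only sees the proxy feature, never gender.
score_by_proxy = hire_rate_by(history, key_index=1)

def average_score(records, gender):
    """Average model score for one gender, computed from the proxy alone."""
    rows = [r for r in records if r[0] == gender]
    return sum(score_by_proxy[r[1]] for r in rows) / len(rows)

print("score by proxy:", score_by_proxy)
print("avg score, men:  ", round(average_score(history, "M"), 3))
print("avg score, women:", round(average_score(history, "F"), 3))
```

In this toy data the men's average score comes out higher than the women's, because the proxy feature is unevenly distributed across the two groups.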

Why Bias Is Harder to Fix Than It Sounds

Many assume:

“Just remove the biased data.”

But bias is often:

Statistical, not obvious

Structural, not intentional

Contextual, not universal

Blindly “cleaning” data can:

Reduce accuracy

Introduce new unfairness

Hide problems instead of solving them

Ethical AI requires measurement, transparency, and continuous auditing — not one-time fixes.
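One way to make "measurement" concrete is to compare selection rates across groups. The sketch below assumes decisions are available as (group, selected) pairs. The function names and the sample data are hypothetical, and the ratio of lowest to highest selection rate is only one of many possible fairness metrics. The 0.8 threshold echoes the "four-fifths" rule of thumb from US employment practice. Here it is an illustrative assumption, not a legal test.

```python
from collections import Counter

def selection_rates(decisions):
    """Map each group to the fraction of its members that were selected."""
    totals, selected = Counter(), Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}

def impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical audit sample: (group label, was the candidate shortlisted?)
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(sample)
ratio = impact_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:  # illustrative threshold, an assumption rather than a legal test
    print("Flag for review: selection rates differ substantially across groups")
```

A check like this is only useful if it runs on every retraining and on live decisions, not once before launch.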

2️⃣ AI Governance: Who Controls the Power?

Modern AI operates in a gray zone:

Too complex for users to understand

Too fast for laws to keep up

Too powerful to leave unchecked

This creates a dangerous imbalance:

Those who build AI hold enormous power over those affected by it.

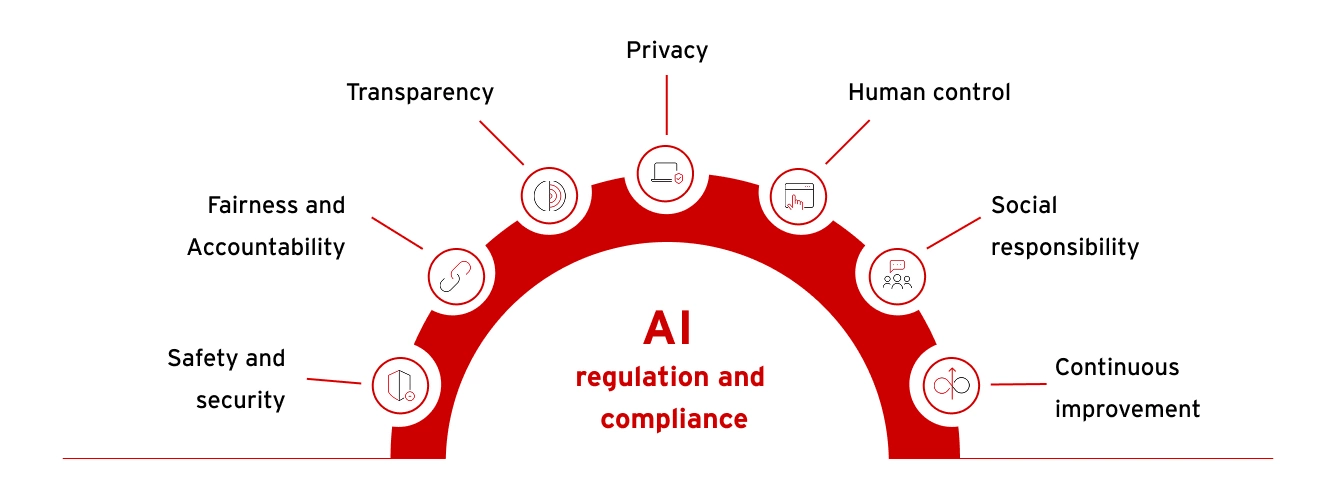

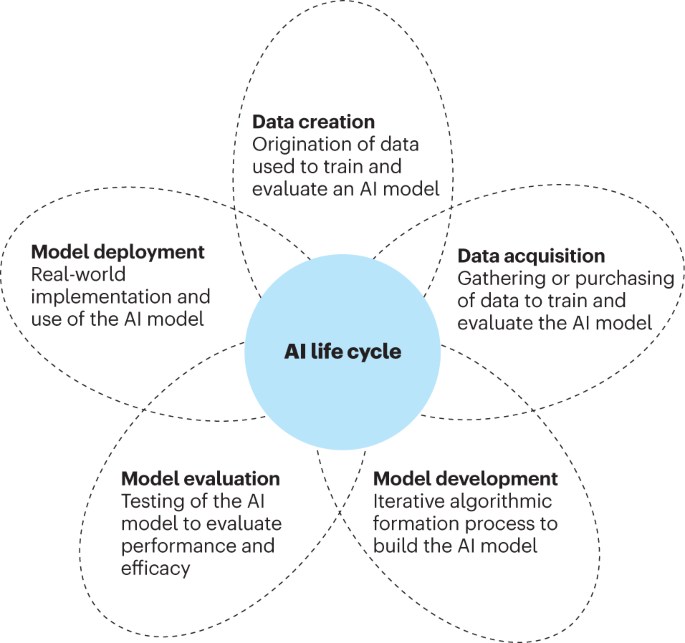

What Is AI Governance?

AI governance refers to the rules, standards, and oversight mechanisms that ensure AI is developed and deployed responsibly.

Strong governance answers questions like:

Who approves AI systems?

Who audits them?

Who can shut them down?

Who is liable when harm occurs?

The Accountability Gap

When AI systems fail, blame becomes unclear:

The developer?

The company?

The data provider?

The end user?

Without governance, responsibility dissolves — and victims are left without answers.

Ethical governance demands:

Clear ownership

Explainable decision pathways

Documented model behavior

Independent audits
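As a rough illustration of what "clear ownership" and "documented model behavior" can mean in practice, each automated decision can be written to an append-only log that names the model version, the accountable owner, and the main reasons for the outcome. The `DecisionRecord` type, its fields, and the sample values below are all hypothetical. Real audit requirements depend on the domain and jurisdiction.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone

@dataclass
class DecisionRecord:
    model_name: str
    model_version: str
    owner: str          # accountable person or team
    inputs: dict        # the data the model actually used
    decision: str
    top_reasons: list   # human-readable factors behind the decision
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

def to_audit_line(record):
    """Serialize one record as a JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)

# Hypothetical example values
record = DecisionRecord(
    model_name="loan-screening",
    model_version="1.4.2",
    owner="credit-risk-team",
    inputs={"income_band": "B", "months_at_address": 18},
    decision="refer_to_human",
    top_reasons=["short address history"],
)
print(to_audit_line(record))
```

With records like this, an independent auditor can reconstruct which model made a decision, on what inputs, and who answers for it.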

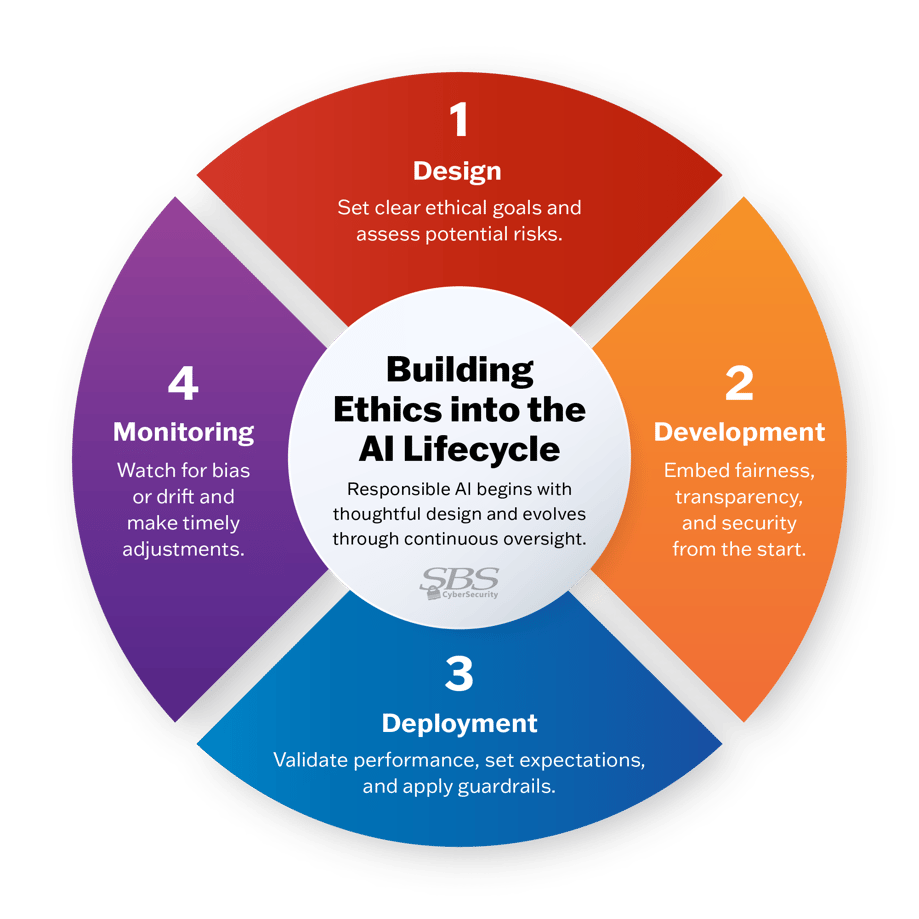

3️⃣ Responsible AI Creation: Ethics by Design

The biggest ethical mistake is treating ethics as a final checklist.

True responsibility begins before the first line of code is written.

Principles of Responsible AI

1. Purpose Limitation

Ask:

Why are we building this?

Not:

Can we build this?

Some problems should not be automated.

2. Human-in-the-Loop

High-stakes decisions such as these should never be fully automated:

Medical diagnoses

Legal judgments

Financial exclusion

AI should assist humans — not replace accountability.
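A minimal sketch of how a human-in-the-loop rule might look in code, assuming each model output carries a confidence score and a flag marking the decision as high-stakes. The `Prediction` type, the `route` function, and the 0.9 threshold are hypothetical choices for illustration.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    label: str          # what the model recommends
    confidence: float   # the model's own confidence, in [0, 1]
    high_stakes: bool   # e.g. medical, legal, or financial exclusion

def route(prediction, min_confidence=0.9):
    """Decide whether a prediction may be auto-applied or needs a human.

    High-stakes predictions always go to a human reviewer. Others go to
    a human only when the model is not confident enough.
    """
    if prediction.high_stakes or prediction.confidence < min_confidence:
        return "human_review"
    return "auto_apply"

print(route(Prediction("deny_loan", 0.99, high_stakes=True)))    # human_review
print(route(Prediction("tag_photo", 0.95, high_stakes=False)))   # auto_apply
print(route(Prediction("tag_photo", 0.60, high_stakes=False)))   # human_review
```

The key design choice is that confidence never overrides the high-stakes flag: a very confident model still cannot finalize a loan denial on its own.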

3. Transparency & Explainability

If users cannot understand:

Why a decision was made

What data influenced it

Then the system should not be trusted with serious outcomes.

4. Privacy by Default

Ethical AI:

Collects minimal data

Avoids unnecessary retention

Protects users even from the system itself

Privacy is not a feature.

It is a baseline responsibility.

5. Continuous Monitoring

Ethics is not static.

Models drift.

Data changes.

Society evolves.

Responsible AI requires ongoing evaluation, not one-time approval.
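One common way to watch for drift is to compare the distribution of a feature or score at training time with what the system sees in production. The sketch below uses the Population Stability Index (PSI). The bin edges, the sample scores, and the 0.2 alert threshold are assumptions for illustration. The threshold is a commonly quoted rule of thumb, not a standard.

```python
import math

def bin_fractions(values, edges, eps=1e-6):
    """Fraction of values falling in each bin defined by sorted edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        # Number of edges at or below v gives the bin index
        counts[sum(v >= e for e in edges)] += 1
    # eps avoids log(0) and division by zero for empty bins
    return [max(c / len(values), eps) for c in counts]

def psi(expected, actual, edges):
    """Population Stability Index between a baseline and a new sample."""
    e = bin_fractions(expected, edges)
    a = bin_fractions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))

# Hypothetical model scores at training time vs. in production
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
current = [0.5, 0.6, 0.6, 0.7, 0.7, 0.8, 0.9, 0.9]
edges = [0.25, 0.5, 0.75]

drift = psi(baseline, current, edges)
print(round(drift, 3))
if drift > 0.2:  # illustrative threshold
    print("Distribution shift detected: schedule a re-evaluation")
```

A drift alert does not say the model is now unfair or wrong, only that the conditions it was approved under no longer hold and a fresh review is due.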

The Hard Truth: Ethical AI Is Slower — and That’s a Good Thing

Unethical AI moves fast:

Faster deployment

Faster scaling

Faster profits

Ethical AI moves deliberately:

With review

With friction

With accountability

Speed without ethics leads to:

Public backlash

Regulatory crackdowns

Loss of trust

In the long run, trust is the most valuable AI asset.

Who Is Responsible for Ethical AI?

The uncomfortable answer: everyone involved.

Developers → design responsibly

Companies → prioritize long-term impact

Governments → regulate wisely

Users → demand transparency

Ethics is not a blocker to innovation.

It is what makes innovation sustainable.

A Simple Ethical AI Test

Before deploying any AI system, ask:

Have we identified and mitigated the ways this system could cause harm at scale?

Would I accept this decision if it affected me?

Can the decision be clearly explained?

Is there a way to appeal or override it?

Are we willing to take responsibility if it fails?

If any answer is “no” — stop and rethink.
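The test above can also be written down as an explicit release gate, so that no question is skipped silently. This is a sketch only: the checklist keys are hypothetical labels paraphrasing the five questions, and `True` means the team can answer that question satisfactorily.

```python
# Hypothetical checklist keys paraphrasing the five questions above.
CHECKLIST = (
    "harm_at_scale_addressed",
    "acceptable_if_it_affected_me",
    "decision_explainable",
    "appeal_or_override_exists",
    "owner_accepts_responsibility",
)

def failed_checks(answers):
    """Return the checklist items that are missing or not answered True."""
    return [item for item in CHECKLIST if answers.get(item) is not True]

def ready_to_deploy(answers):
    """A system passes only if every item is explicitly answered True."""
    return not failed_checks(answers)

answers = {item: True for item in CHECKLIST}
answers["appeal_or_override_exists"] = False
print(ready_to_deploy(answers))   # False
print(failed_checks(answers))     # ['appeal_or_override_exists']
```

Treating a missing answer the same as a "no" is deliberate: an unanswered question should block deployment just as a failed one does.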

Final Thought: The Future of AI Is a Moral Choice

AI will shape:

Economies

Democracies

Human opportunity

But technology does not choose values.

We do.

The real question is not:

“Can AI be ethical?”

The real question is:

“Will we choose to make it so?”

📢 If this article helped you:

Share it with someone building AI

Start ethical conversations early

Build technology that respects humanity